Knowledge graphs address hallucination and data fragmentation issues that no other technology solves as well.

The widely cited finding that 95% of enterprise GenAI pilots deliver zero measurable return reflects execution failures rooted in poor data integration and weak contextual grounding. These are precisely the problems that knowledge graphs are designed to solve.

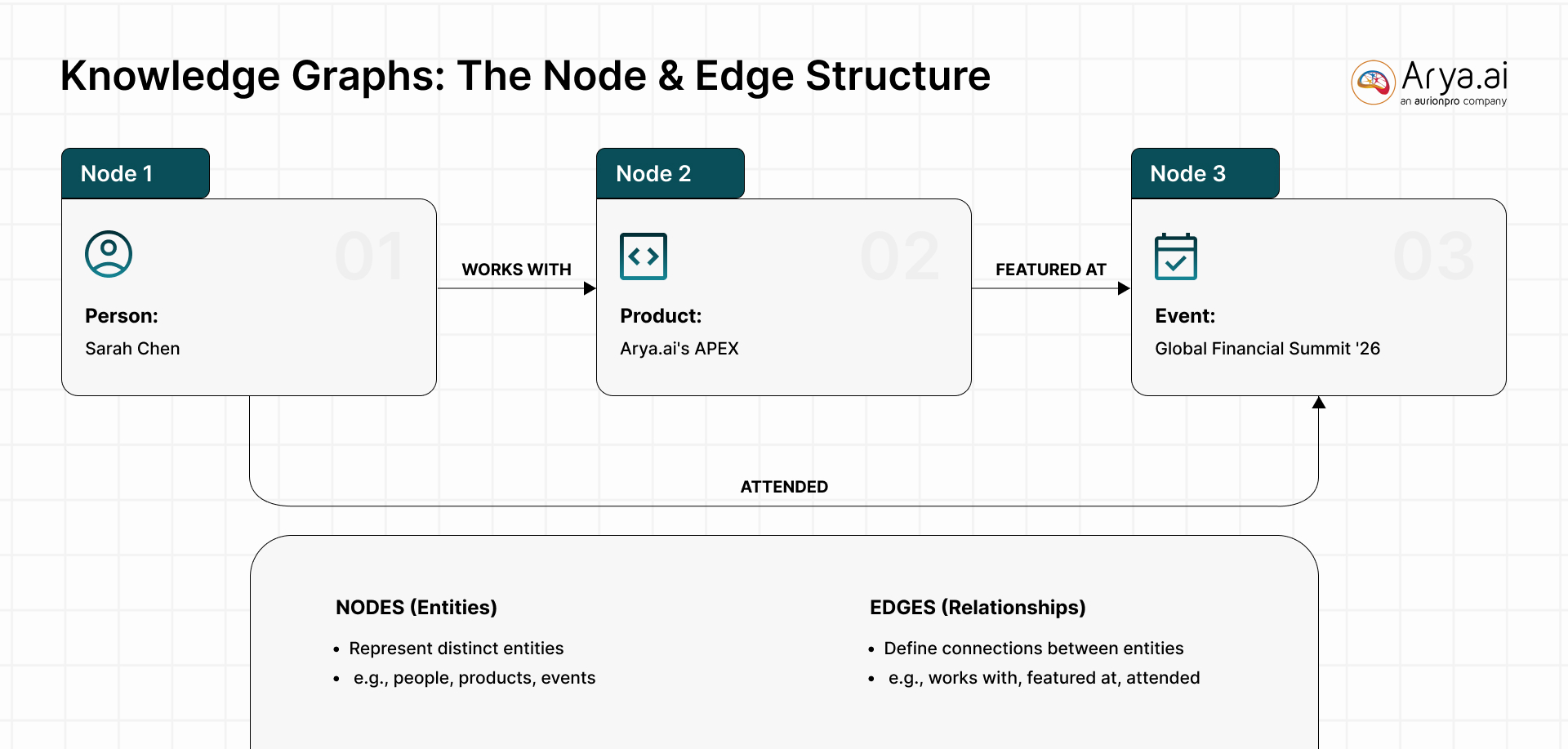

For the uninitiated, knowledge graphs follow a node-and-edge structure. The nodes represent entities such as people, products, and events, while the edges capture the relationships between them: works for, owns, located in, bought, caused, and so on. The structure acts as a machine-readable map that lets systems capture context and semantics.
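To make the node-and-edge idea concrete, here is a minimal sketch in plain Python. The entity names and relationships are hypothetical, and a production system would use a graph database rather than an in-memory dictionary.

```python
from collections import defaultdict

# Hypothetical example facts, each a (subject, relationship, object) triple.
TRIPLES = [
    ("Alice", "works_for", "Acme Corp"),
    ("Acme Corp", "located_in", "Berlin"),
    ("Alice", "bought", "Laptop X"),
    ("Acme Corp", "owns", "Laptop X"),
]

def build_graph(triples):
    """Index edges by source node: node -> list of (relationship, target)."""
    graph = defaultdict(list)
    for subject, relation, obj in triples:
        graph[subject].append((relation, obj))
    return graph

def neighbors(graph, node, relation=None):
    """Return targets reachable from `node`, optionally filtered by relationship."""
    return [t for r, t in graph.get(node, []) if relation is None or r == relation]

graph = build_graph(TRIPLES)
print(neighbors(graph, "Alice"))               # ['Acme Corp', 'Laptop X']
print(neighbors(graph, "Alice", "works_for"))  # ['Acme Corp']
```

Because each edge carries a named relationship, a system can ask "who does Alice work for?" as a lookup instead of inferring it from free text.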

The Root Causes of GenAI Failure in Enterprises

- Data quality and integration failures top every list. A study found 43% of CDOs cite data readiness as their primary obstacle. Gartner estimates poor data quality costs organizations $12.9 million per year.

- The core barrier is learning. Most GenAI systems cannot retain feedback, adapt to context, or improve over time. For complex, high-stakes work, 90% of enterprise users still prefer humans because AI "repeats the same mistakes" and lacks institutional memory.

- Organizational dysfunction compounds the problem. BCG's "70-20-10 rule" frames AI success as 70% people and processes, 20% technology, and 10% algorithms. Over half of GenAI budgets flow to sales and marketing, yet the highest ROI comes from back-office automation.

Knowledge Graphs Attack the Exact Failure Modes That Matter the Most in Production

If we give models verified facts as interconnected entities and relationships, as knowledge graphs do, we address the three things that cause GenAI to fail in enterprises: inaccuracy, data fragmentation, and governance opacity.

Evidence Reinforces the Accuracy Theories

A data.world benchmark tested LLM performance across 43 business questions on enterprise SQL databases and found that adding a knowledge graph roughly tripled response accuracy. On complex questions involving KPIs and strategic planning, LLMs alone scored 0%, while the knowledge graph-backed setup did significantly better.

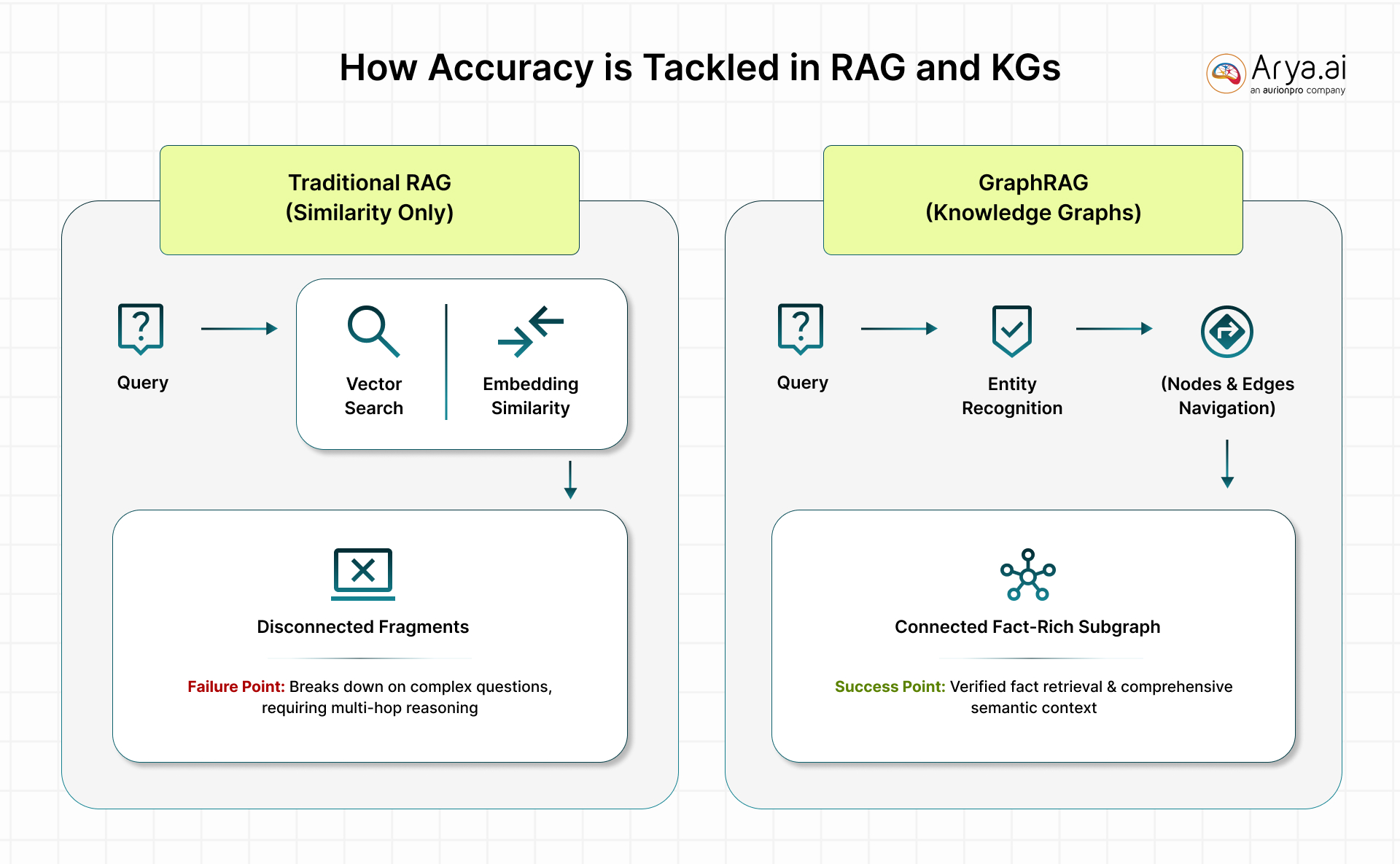

The fundamental mechanism is straightforward: instead of asking an LLM to guess from statistical patterns, GraphRAG retrieves verified facts from structured relationships and uses them to constrain generation.

Traditional vector-based RAG (the most common approach to grounding LLMs) chunks documents into fragments and retrieves them via embedding similarity.

This approach breaks down on multi-hop reasoning, which requires connecting information across multiple entities and relationships. GraphRAG augments it with structured graph traversal: a query triggers entity recognition, knowledge graph traversal via Cypher or SPARQL (query languages used to talk to knowledge graphs), subgraph retrieval with contextual relationships, and then LLM generation grounded in verified facts with source attribution.
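The four GraphRAG steps can be sketched in a few lines. This is an illustration under stated assumptions, not a reference implementation: the facts, source names, and function names are hypothetical, entity recognition is naive string matching, traversal is an in-memory breadth-first search standing in for a Cypher or SPARQL query, and the final LLM call is left out (the sketch stops at the grounded prompt).

```python
from collections import deque

# Hypothetical facts: (subject, relationship, object, source document).
FACTS = [
    ("Product A", "sold_in", "Region B", "crm_export.csv"),
    ("Region B", "managed_by", "Dana Lee", "hr_directory.json"),
    ("Dana Lee", "reports_to", "Sam Ortiz", "hr_directory.json"),
    ("Product C", "sold_in", "Region D", "crm_export.csv"),
]

def recognize_entities(question, facts):
    """Naive entity recognition: known node names that appear in the question."""
    names = {f[0] for f in facts} | {f[2] for f in facts}
    return sorted(n for n in names if n.lower() in question.lower())

def retrieve_subgraph(seeds, facts, max_hops=2):
    """Breadth-first traversal over outgoing edges, up to `max_hops` from the seeds."""
    seen, found = set(seeds), []
    queue = deque((s, 0) for s in seeds)
    while queue:
        node, depth = queue.popleft()
        if depth == max_hops:
            continue
        for fact in facts:
            subject, _, obj, _ = fact
            if subject == node:
                if fact not in found:
                    found.append(fact)
                if obj not in seen:
                    seen.add(obj)
                    queue.append((obj, depth + 1))
    return found

def build_prompt(question, subgraph):
    """Assemble a prompt that restricts the LLM to retrieved facts, with sources."""
    lines = [f"- {s} {r} {o} [source: {src}]" for s, r, o, src in subgraph]
    return (
        "Answer using only the facts below and cite their sources.\n"
        + "\n".join(lines)
        + f"\nQuestion: {question}"
    )

question = "Who manages the region where Product A is sold?"
seeds = recognize_entities(question, FACTS)
subgraph = retrieve_subgraph(seeds, FACTS)
print(build_prompt(question, subgraph))
```

Answering this question takes two hops (Product A to Region B, Region B to Dana Lee), and the unrelated Product C fact never reaches the prompt.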

On Data Integration, Knowledge Graphs Provide a Semantic Unification Layer

This layer addresses the silo problem without requiring physical data migration. An enterprise knowledge graph links concepts across CRM, ERP, support systems, and research databases using shared ontologies (formal representations of what entities exist, what relationships are valid, and what inference rules apply).

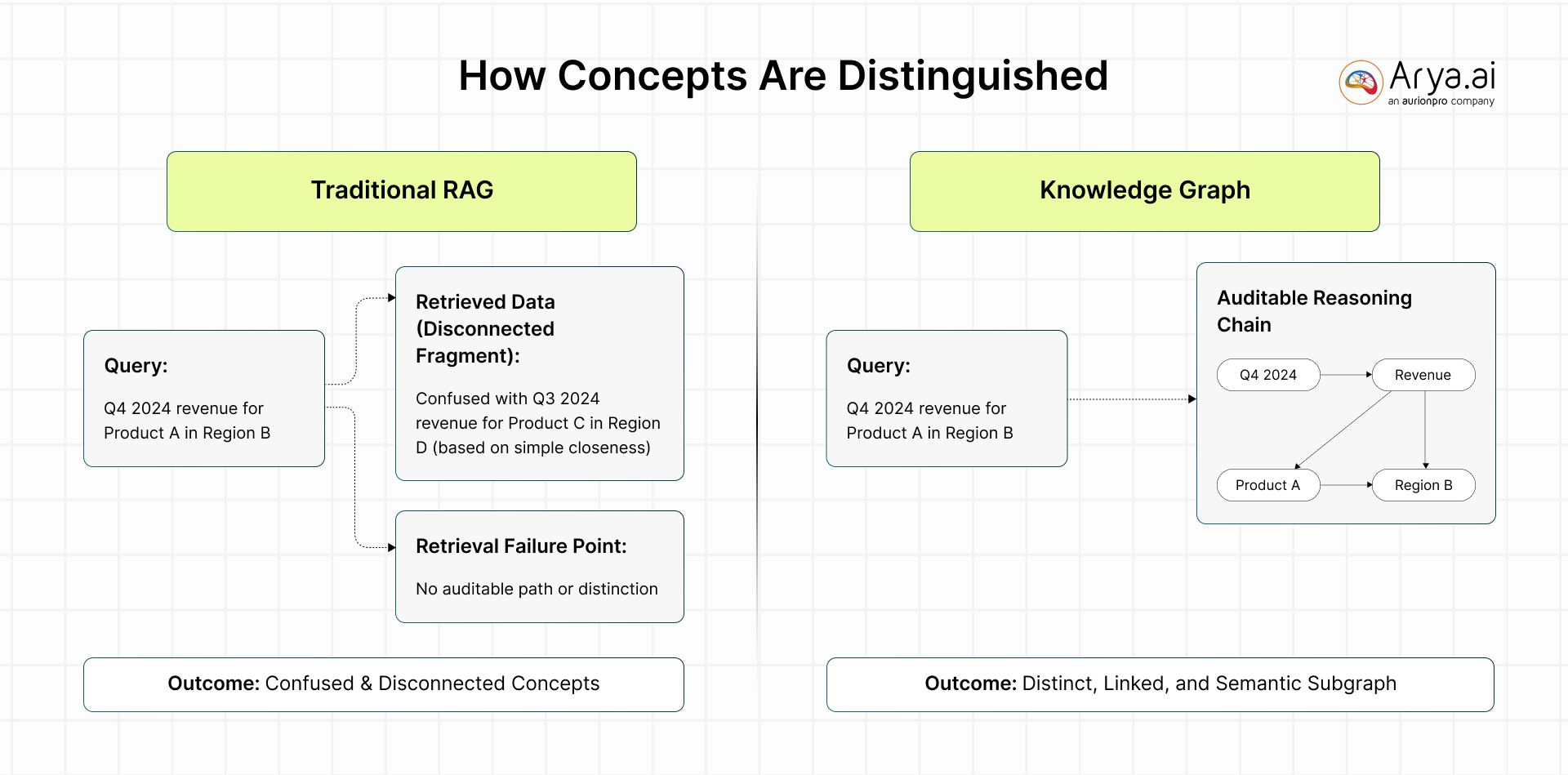

This means "Q4 2024 revenue for Product A in Region B" is understood as distinct from "Q3 2024 revenue for Product C in Region D," rather than being lumped together by vector similarity.
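A small sketch shows the difference. The revenue figures are hypothetical, and word-set overlap is used only as a crude stand-in for embedding similarity (real embeddings behave differently in detail, but share the tendency to score near-duplicate phrasing as close).

```python
from typing import NamedTuple

class RevenueKey(NamedTuple):
    product: str
    region: str
    quarter: str

# Hypothetical figures, keyed by explicit entities rather than by text.
REVENUE = {
    RevenueKey("Product A", "Region B", "Q4 2024"): 1_200_000,
    RevenueKey("Product C", "Region D", "Q3 2024"): 850_000,
}

def token_overlap(a, b):
    """Jaccard overlap of lowercase word sets, a rough proxy for text similarity."""
    ta, tb = set(a.lower().split()), set(b.lower().split())
    return len(ta & tb) / len(ta | tb)

q1 = "Q4 2024 revenue for Product A in Region B"
q2 = "Q3 2024 revenue for Product C in Region D"

print(token_overlap(q1, q2))  # 0.5: half the vocabulary is shared
print(REVENUE[RevenueKey("Product A", "Region B", "Q4 2024")])  # 1200000
print(REVENUE[RevenueKey("Product C", "Region D", "Q3 2024")])  # 850000
```

The two questions look alike as text, yet they resolve to entirely different keys in the structured store, so there is no way to return one answer for the other.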

On Governance and Explainability, Knowledge Graphs Offer Auditability

Presently, knowledge graphs offer auditable reasoning chains that no other approach can. Every AI response grounded in a knowledge graph includes a traceable path from conclusion back to source facts. You can trace it node by node, relationship by relationship.
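As a rough illustration of such a trace, the sketch below walks from a starting entity to a conclusion and reports every hop with its source. The entities, relationships, and source labels are hypothetical, and the search is a plain breadth-first search over an in-memory edge list.

```python
from collections import deque

# Hypothetical edges: (subject, relationship, object, source system).
EDGES = [
    ("Invoice 1042", "issued_to", "Acme Corp", "erp/invoices"),
    ("Acme Corp", "owned_by", "Holding Ltd", "registry/filings"),
    ("Holding Ltd", "flagged_in", "Sanctions List", "compliance/watchlist"),
]

def trace_path(start, goal, edges):
    """Breadth-first search returning the edges that link start to goal, or None."""
    queue = deque([(start, [])])
    visited = {start}
    while queue:
        node, path = queue.popleft()
        if node == goal:
            return path
        for edge in edges:
            if edge[0] == node and edge[2] not in visited:
                visited.add(edge[2])
                queue.append((edge[2], path + [edge]))
    return None

path = trace_path("Invoice 1042", "Sanctions List", EDGES)
for subject, relation, obj, source in path:
    print(f"{subject} --{relation}--> {obj}  (source: {source})")
```

An auditor reading this output can check each hop against the system it came from, which is the property a regulator typically cares about.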

In tightly regulated industries such as financial services, where auditability is a regulatory requirement, this is critical.

Knowledge graphs can also attach access permissions to entities, so data governance is enforced at the query layer rather than left to application-level controls.
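One way to picture query-layer enforcement is a lookup that checks the caller's role against every entity a fact touches before returning it. The roles, entities, and the `query` function here are hypothetical; real graph databases expose their own access-control mechanisms, which this sketch does not model.

```python
# Hypothetical permissions: entity -> roles allowed to see it.
ENTITY_ROLES = {
    "Dana Lee": {"hr", "sales"},
    "Region B": {"hr", "sales"},
    "Salary Band 7": {"hr"},
}

# Hypothetical facts: (subject, relationship, object).
FACTS = [
    ("Dana Lee", "manages", "Region B"),
    ("Dana Lee", "has_salary_band", "Salary Band 7"),
]

def query(subject, role):
    """Return facts about `subject` only if `role` may see both endpoints.

    Entities without a permissions entry are hidden by default.
    """
    return [
        (s, r, o)
        for s, r, o in FACTS
        if s == subject
        and role in ENTITY_ROLES.get(s, set())
        and role in ENTITY_ROLES.get(o, set())
    ]

print(query("Dana Lee", "sales"))  # [('Dana Lee', 'manages', 'Region B')]
print(query("Dana Lee", "hr"))     # both facts
```

Because the filter sits inside the query function, every application that reads from the graph inherits the same rules without reimplementing them.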

The Honest Case Against Knowledge Graphs as a Universal Solution

Four significant constraints limit knowledge graphs as an enterprise GenAI strategy.

The Cost

GraphRAG implementations cost 3–5X more than baseline RAG. Building enterprise knowledge graphs requires extensive ontology design, domain expertise, and ongoing curation that traditional RAG avoids entirely. If your organization operates at sufficient scale and the use case demands it, you will likely see strong returns.

Not Everyone’s Go-To

Adoption is essentially flat despite the hype. BARC Research data from late 2025 shows that among AI adopters, 27% had knowledge graphs in production, compared with 26% in early 2024. More concerning, earlier-stage evaluations and proof-of-concepts actually declined, suggesting the pipeline for new knowledge graph implementations has slowed.

Opting for Simple Alternatives: Easy Way Out

Advanced RAG techniques deliver adequate results for straightforward document Q&A, content summarization, and single-domain search, at a fraction of the cost and complexity. However, you cannot get away with this in sensitive industries such as financial services and healthcare.

Scarce Expertise

Production KG development requires ontology engineering, graph query languages (SPARQL or Cypher), graph database administration, NLP pipeline management, and deep domain knowledge. This combination is exceptionally rare in the market. As a result, knowledge graph projects often fail, and those that succeed at first are often hard to sustain.

A Three-tier Framework for Choosing the Right Approach

The decision to invest in knowledge graphs should follow a graduated assessment based on use case complexity, accuracy requirements, regulatory exposure, and data landscape. Not every enterprise GenAI problem warrants graph infrastructure, but certain problem profiles make knowledge graphs effectively irreplaceable.

Tier 1: Advanced RAG (weeks to deploy, lowest cost).

This is the right starting point when use cases involve simple document Q&A, content search, and summarization, or single-domain knowledge bases with moderate accuracy tolerance. Hybrid search, reranking, and chunking optimization deliver meaningful improvements over naive RAG at minimal incremental cost. Organizations should exhaust these techniques before moving to graph-based approaches.
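As one example of a Tier 1 technique, hybrid search needs a way to merge a keyword ranking with a vector ranking. The sketch below uses reciprocal rank fusion, a common fusion method chosen here for illustration; the document IDs and rankings are hypothetical, and `k=60` is a conventional default rather than a tuned value.

```python
def reciprocal_rank_fusion(rankings, k=60):
    """Fuse ranked lists: each document scores sum(1 / (k + rank)) across lists."""
    scores = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    # Highest fused score first; ties broken alphabetically for determinism.
    return sorted(scores, key=lambda d: (-scores[d], d))

# Hypothetical results from a keyword index and a vector index.
keyword_ranking = ["doc_policy", "doc_faq", "doc_pricing"]
vector_ranking = ["doc_faq", "doc_contract", "doc_policy"]

fused = reciprocal_rank_fusion([keyword_ranking, vector_ranking])
print(fused)  # ['doc_faq', 'doc_policy', 'doc_contract', 'doc_pricing']
```

Documents that both retrievers rank highly rise to the top, which is typically what improves on either retriever alone without any graph infrastructure.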

Tier 2: Lightweight GraphRAG (1–3 months, moderate investment).

When queries require multi-hop reasoning across documents, some relationship awareness, or improved accuracy beyond the ~80% ceiling of vector-only RAG, a hybrid approach combining vector embeddings with auto-generated graph structures makes sense. This tier suits organizations with growing accuracy demands but without the scale or regulatory pressure to justify full enterprise knowledge graphs.

Tier 3: Full Enterprise Knowledge Graph (3–12+ months, strategic investment).

This tier is warranted when five conditions converge:

- Complex multi-hop reasoning across heterogeneous data domains is a core requirement

- Regulatory or compliance mandates demand explainable, auditable AI reasoning chains

- Data integration across enterprise silos (CRM, ERP, research, support) is essential to the use case

- Accuracy requirements exceed 95%, and hallucination tolerance is near zero

- Multiple AI use cases will leverage the same knowledge infrastructure, amortizing the investment

Industries where Tier 3 investment is most defensible include financial services compliance (fraud detection, KYC/AML, where graph structures naturally model transaction networks), healthcare decision support (where patient safety demands verified reasoning), and complex manufacturing (supply chain traceability, predictive maintenance across interconnected systems).
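The three tiers can be condensed into a rough screening function. This is a simplification for illustration only: the field names are hypothetical, the 95% and ~80% thresholds are taken from the tier descriptions above, and a real assessment would weigh these factors rather than apply hard cutoffs.

```python
from dataclasses import dataclass

@dataclass
class UseCase:
    needs_multi_hop: bool
    needs_auditability: bool
    needs_cross_silo_integration: bool
    required_accuracy: float  # fraction between 0.0 and 1.0
    shared_by_multiple_use_cases: bool

def recommend_tier(uc: UseCase) -> int:
    """Return 1, 2, or 3 following the tier descriptions in this article."""
    tier3_conditions = [
        uc.needs_multi_hop,
        uc.needs_auditability,
        uc.needs_cross_silo_integration,
        uc.required_accuracy > 0.95,
        uc.shared_by_multiple_use_cases,
    ]
    if all(tier3_conditions):
        return 3  # full enterprise knowledge graph
    if uc.needs_multi_hop or uc.required_accuracy > 0.80:
        return 2  # lightweight GraphRAG
    return 1      # advanced RAG

kyc_screening = UseCase(True, True, True, 0.99, True)
internal_faq = UseCase(False, False, False, 0.75, False)
research_assistant = UseCase(True, False, False, 0.85, False)

print(recommend_tier(kyc_screening))       # 3
print(recommend_tier(internal_faq))        # 1
print(recommend_tier(research_assistant))  # 2
```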

What Decision Makers Should Do!

The enterprise GenAI failure crisis is fundamentally a data grounding and integration crisis. LLMs are powerful enough. The missing layer is structured, verified, relationship-rich context that turns statistical generation into reliable enterprise reasoning.

But context is the critical qualifier. The honest assessment is that knowledge graphs are the right answer for the right problems: the high-complexity, high-stakes, regulated, multi-domain cases where accuracy and explainability are non-negotiable.

The strategic insight for decision-makers is this: knowledge graphs are infrastructure that enables GenAI to work at enterprise grade. Organizations that build knowledge graphs create a durable strategic asset: a machine-readable corporate memory that serves multiple AI systems, preserves institutional knowledge explicitly rather than burying it in model weights, and provides the governance backbone that regulated industries require.

The question is not whether to adopt knowledge graphs eventually, but whether the current use case portfolio justifies the investment. For financial institutions, it almost always does.

